AI in Mental Health: Clinical Decision Support, Applications, and Real-World Lessons

How AI improves clinical decision support in mental health, covering risk prediction, monitoring, and implementation limits.

February 18, 2026

Introduction

Mental health services face growing pressure from high caseloads, fragmented records, limited specialist capacity, and inconsistent documentation. Clinicians often make complex decisions in brief appointments, relying on incomplete patient histories scattered across systems. As a result, care decisions can depend on partial information, reducing consistency across providers.

To address this, clinical decision support systems are increasingly integrating artificial intelligence. In mental health care, AI structures patient data and surfaces patterns that manual review may overlook. Its purpose is not to replace clinical judgment, but to strengthen clarity, consistency, and prioritization in demanding workflows.

AI functions as a structured decision-support layer, analyzing histories and generating signals to guide assessment and treatment planning. Clinicians remain responsible for interpreting those signals within each patient’s broader clinical context.

This article examines where AI strengthens mental health clinical decision support, where practical limits persist, and what real-world deployment reveals about responsible implementation.

AI-Enabled Clinical Decision Support in Mental Health

AI-enabled clinical decision support assists clinicians during assessment and treatment planning by providing structured input at key decision points. In mental health care, this includes risk indicators, prioritization tools, and treatment guidance that organize complex information within existing workflows. These systems support more consistent and transparent decisions without replacing clinical judgment.

AI enhances these systems by analyzing patient histories and clinical notes to identify patterns and track symptom progression. In high-caseload settings, this improves visibility and consistency in decision-making. However, structural factors limit performance. Evolving diagnostic categories, inconsistent documentation, and incomplete outcome tracking reduce the reliability of outputs.

Organizations must understand these constraints before deploying AI. Decision support delivers value not only through technical capability but also through effective integration into clinical workflows.

Applications of AI in Mental Health Clinical Decision Support

In practice, AI-enabled clinical decision support varies by the type of decision. Its impact depends on data quality and workflow integration. Some applications deliver measurable gains, while others expose practical limits. The following sections examine four key decision areas and their benefits and challenges.

Risk Stratification & Crisis Prediction

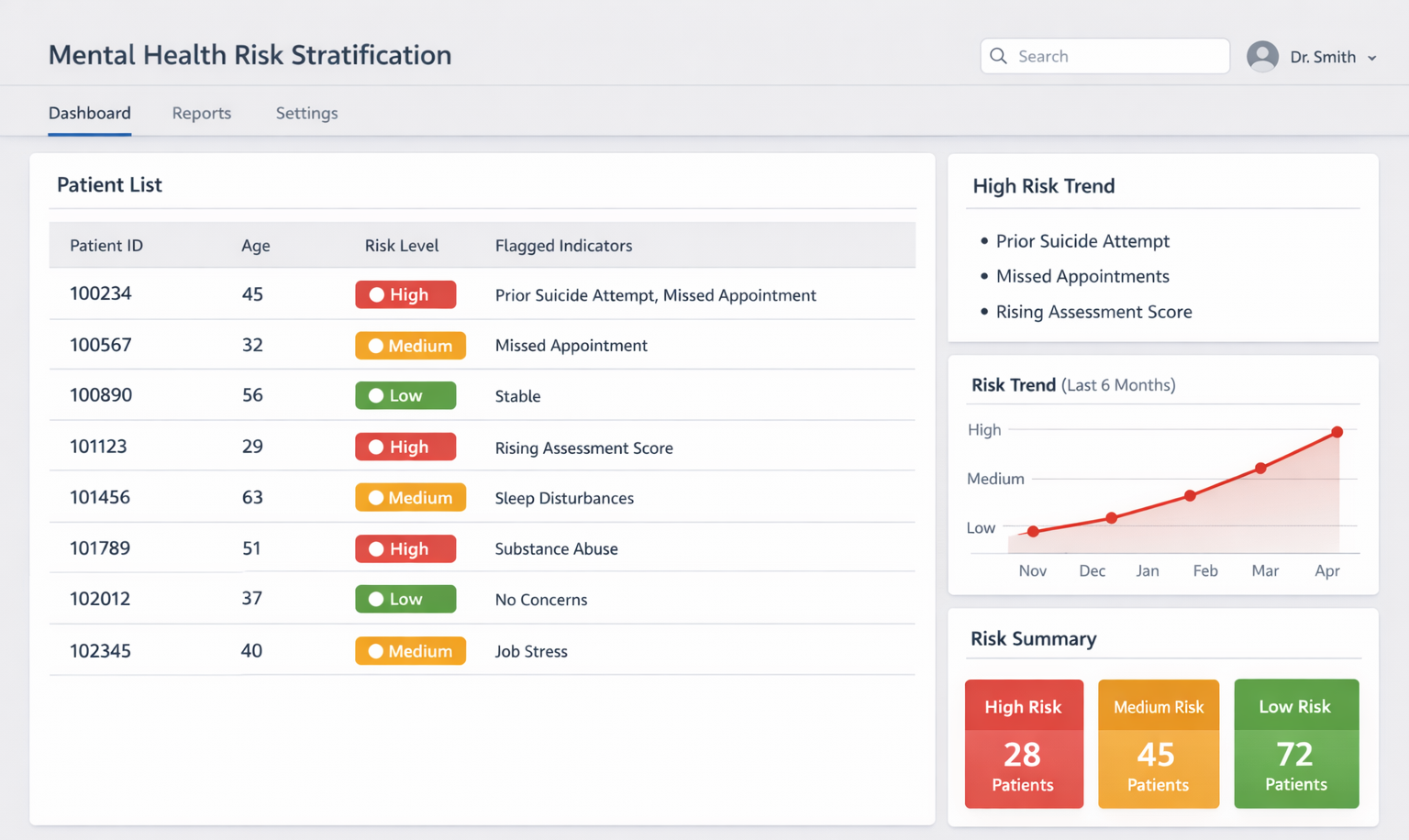

Illustrative example of a clinical risk stratification dashboard supporting prioritized intervention. Image Source: AI-generated.

Risk stratification is among the most established applications of AI in mental health decision support. These systems analyze patient histories, prior admissions, symptom patterns, and service utilization to estimate the likelihood of suicide attempts, relapse, or crisis escalation. The output is not a diagnosis, but a structured risk signal that helps clinicians prioritize attention across complex caseloads.

By flagging higher-risk cases, these systems help teams focus monitoring and intervene earlier. This supports more deliberate allocation of limited resources and more consistent prioritization, especially in high-volume settings.

However, reliability depends heavily on data quality. Suicide attempts and relapse events are not always recorded in structured formats, and key details may remain in narrative notes. When data is incomplete or inconsistent, prediction reliability declines and alert burden increases. These systems support prioritization, but clinician trust depends on clear thresholds and structured human review.
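To make "clear thresholds" concrete, the following is a minimal Python sketch that maps a model's risk score to review tiers and measures how many alerts a given cut-off would generate. It does not describe any particular product: the 0.7 and 0.4 cut-offs, the tier names, and the functions `stratify` and `alert_burden` are hypothetical, and the score is assumed to come from an already-validated model.

```python
from dataclasses import dataclass

# Hypothetical cut-offs on a 0-1 model risk score. In practice these would be
# agreed with clinicians and revisited as alert burden is monitored.
HIGH_CUTOFF = 0.7
ELEVATED_CUTOFF = 0.4


@dataclass
class RiskSignal:
    patient_id: str
    score: float        # model-estimated risk, assumed to lie in [0, 1]
    tier: str           # "high", "elevated", or "routine"
    needs_review: bool  # True means a clinician reviews before any action


def stratify(patient_id: str, score: float,
             high_cutoff: float = HIGH_CUTOFF,
             elevated_cutoff: float = ELEVATED_CUTOFF) -> RiskSignal:
    """Map a risk score to a tier: a prioritization signal, not a diagnosis."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"risk score out of range: {score}")
    if score >= high_cutoff:
        tier = "high"
    elif score >= elevated_cutoff:
        tier = "elevated"
    else:
        tier = "routine"
    return RiskSignal(patient_id, score, tier, needs_review=(tier != "routine"))


def alert_burden(scores: dict, elevated_cutoff: float = ELEVATED_CUTOFF) -> float:
    """Share of the caseload that would raise a review alert at this cut-off."""
    if not scores:
        return 0.0
    flagged = sum(stratify(pid, s, elevated_cutoff=elevated_cutoff).needs_review
                  for pid, s in scores.items())
    return flagged / len(scores)


caseload = {"p1": 0.82, "p2": 0.35, "p3": 0.51, "p4": 0.12}
print(alert_burden(caseload))                       # 0.5
print(alert_burden(caseload, elevated_cutoff=0.3))  # 0.75
```

Lowering the elevated cut-off from 0.4 to 0.3 raises the flagged share of this toy caseload from one half to three quarters. This is the alert-burden trade-off described above, and where the line sits is a clinical governance decision rather than a purely modeling one.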

Treatment Pathway & Intervention Support

Example of treatment pathway decision support based on historical response patterns. Image Source: AI-generated.

AI supports treatment planning by analyzing how patients respond to interventions over time. These systems review historical records to identify response patterns, compare therapy trajectories, and group medication outcomes. The goal is not to automate prescriptions, but to provide structured reference points that help clinicians weigh options based on documented experience from similar patients.

This structured comparison improves consistency and transparency in treatment discussions, especially in complex or high-volume settings.

Reliability depends on data consistency. Longitudinal records often fragment when patients change providers or discontinue care, and documentation standards vary across systems. These gaps weaken pattern detection, limit transferability across settings, and can produce unstable guidance. Adoption improves when outputs come with clear explanations and align with existing workflows.
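One way to provide structured reference points without overstating thin evidence is to report response rates only where enough outcomes are documented, and to show how many outcomes are missing. The Python sketch below assumes each historical record reduces to an (intervention, responded) pair; the intervention labels, the 20-outcome floor, and `response_summary` are hypothetical.

```python
from collections import defaultdict

# Hypothetical floor: groups with fewer documented outcomes are withheld
# because their response rates are too unstable to guide a discussion.
MIN_DOCUMENTED = 20


def response_summary(records, min_documented=MIN_DOCUMENTED):
    """Summarize documented response per intervention.

    records: iterable of (intervention, responded) pairs, where responded is
    True/False, or None when follow-up was never documented.
    """
    counts = defaultdict(lambda: {"documented": 0, "responded": 0, "missing": 0})
    for intervention, responded in records:
        c = counts[intervention]
        if responded is None:
            c["missing"] += 1  # fragmented record: outcome never captured
            continue
        c["documented"] += 1
        c["responded"] += int(responded)

    summary = {}
    for intervention, c in counts.items():
        enough = c["documented"] >= min_documented
        summary[intervention] = {
            "documented": c["documented"],
            "missing_outcomes": c["missing"],
            # None signals "insufficient evidence" rather than a 0% or 100% rate
            "response_rate": c["responded"] / c["documented"] if enough else None,
        }
    return summary


history = ([("therapy_A", True)] * 15 + [("therapy_A", False)] * 10
           + [("therapy_A", None)] * 5
           + [("medication_B", True)] * 6 + [("medication_B", False)] * 4)
summary = response_summary(history)
print(summary["therapy_A"])     # rate 0.6 from 25 documented, 5 missing
print(summary["medication_B"])  # response_rate None: only 10 documented
```

Returning `None` for small groups keeps sparse, fragmented histories from appearing as confident guidance, and the missing-outcome count makes follow-up gaps visible to the clinician weighing the options.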

Intake Assessment & Prioritization

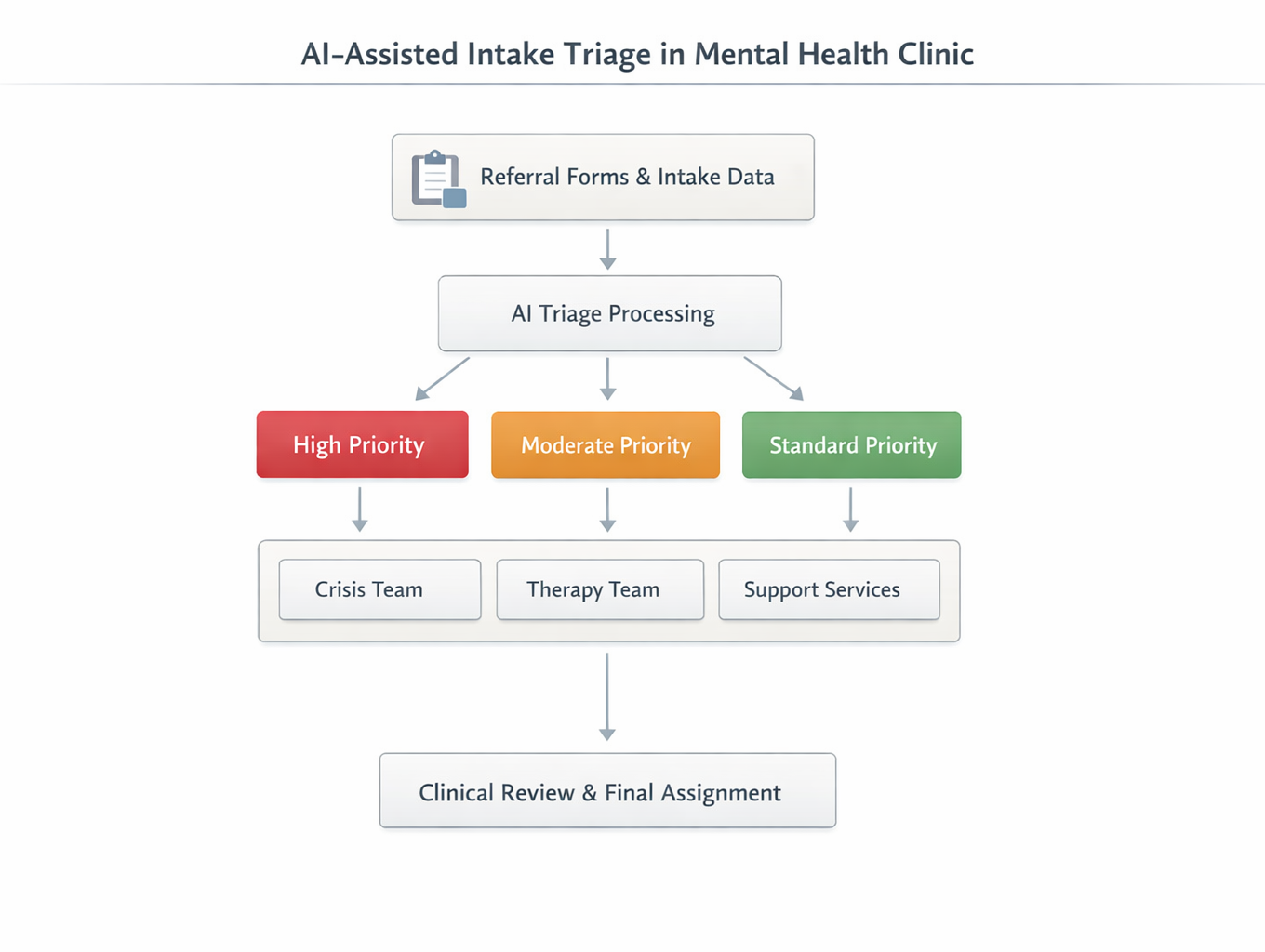

Workflow representation of AI-assisted intake triage with human oversight. Image Source: AI-generated.

Organizations use AI to support intake prioritization by structuring referral and early patient data. Natural language processing analyzes intake forms, referral notes, and assessments to extract symptom indicators. The system converts these inputs into severity scores or routing categories to help teams decide which cases require urgent attention and which can follow standard pathways.

This standardized approach reduces bottlenecks and improves consistency in assigning patients to appropriate providers or service levels, especially in high-demand settings.

Deployment can create workflow friction. Poorly aligned thresholds may generate excessive alerts and increase reviewer fatigue. Structured scoring may miss complex social or cultural contexts, leading to misclassification. Integration can disrupt review processes if teams do not align outputs with existing workflows. Clear explanations and gradual rollout improve adoption.
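The intake flow described above (extract indicators, convert them to a severity score, route the case) can be sketched in a few lines. To stay self-contained, this Python example substitutes simple regular-expression matching for a trained NLP model; the indicator patterns, weights, cut-offs, and the `triage` function are all hypothetical and are not clinical guidance.

```python
import re

# Hypothetical indicator lexicon and weights, standing in for a trained NLP
# extractor. Patterns and weights here are illustrative, not clinical guidance.
INDICATORS = {
    "self_harm": (re.compile(r"\b(self[- ]harm|hurt (myself|themselves)|suicid\w*)", re.I), 5),
    "sleep_disruption": (re.compile(r"\b(insomnia|can(no|')t sleep|not sleeping)\b", re.I), 1),
    "low_mood": (re.compile(r"\b(hopeless\w*|depressed|low mood)\b", re.I), 2),
}
URGENT_SCORE = 5    # hypothetical routing cut-offs
PRIORITY_SCORE = 3


def triage(referral_text: str) -> dict:
    """Extract indicators, sum them into a severity score, and suggest a route."""
    found = [name for name, (pattern, _) in INDICATORS.items()
             if pattern.search(referral_text)]
    severity = sum(INDICATORS[name][1] for name in found)
    if severity >= URGENT_SCORE:
        route = "urgent"
    elif severity >= PRIORITY_SCORE:
        route = "priority"
    else:
        route = "standard"
    # The route is a suggestion only; an intake clinician confirms or overrides it.
    return {"indicators": found, "severity": severity, "route": route,
            "requires_confirmation": True}


print(triage("Client reports feeling hopeless and not sleeping for weeks."))
# {'indicators': ['sleep_disruption', 'low_mood'], 'severity': 3, 'route': 'priority', 'requires_confirmation': True}
```

Pattern matching of this kind also illustrates the misclassification risk: a note reading "denies self-harm" still matches the self-harm pattern and routes as urgent, while indirect or culturally specific expressions of distress match nothing. Setting `requires_confirmation` on every result reflects the human-oversight step in the intake workflow.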

Longitudinal Monitoring & Pattern Detection

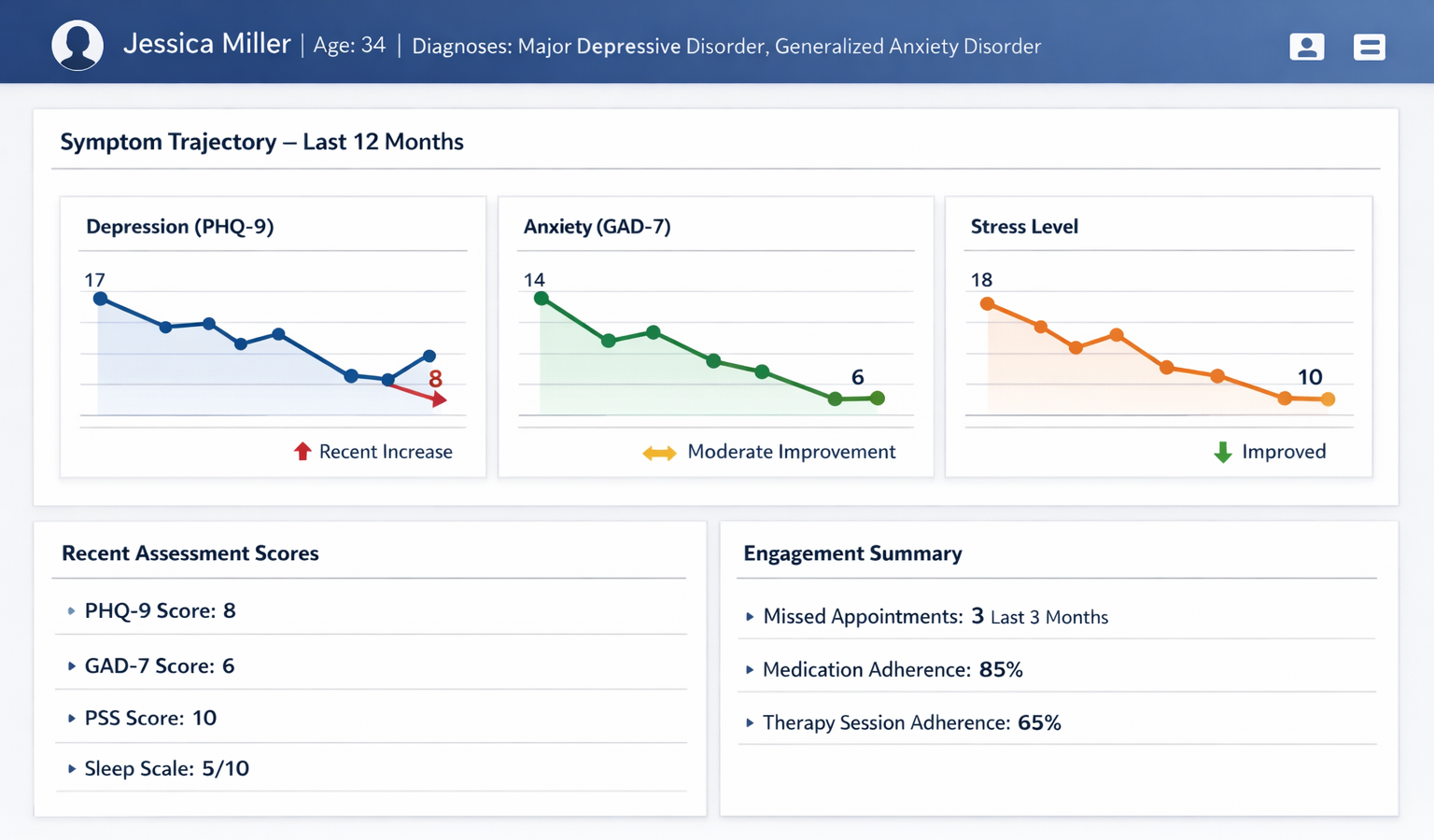

Longitudinal monitoring dashboard showing symptom trends and engagement indicators. Image Source: AI-generated.

AI-enabled decision support strengthens longitudinal monitoring by tracking symptom progression and flagging early signs of deterioration. These systems analyze repeated assessments, clinical notes, and engagement patterns to identify meaningful shifts in patient stability. Rather than relying on single-point predictions, they surface trends through structured dashboards that support ongoing clinical review.

This longitudinal view gives teams clearer insight into trajectory changes and supports more informed decisions in complex cases.

System reliability depends on consistent data. Symptom measures are not always recorded in structured formats, and documentation varies across providers. Free-text notes often lack standardized tracking fields. Changes in assessment tools or documentation standards introduce data drift and reduce stability. Pattern detection depends more on data consistency than on algorithm complexity.
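Trend-based flagging and the data-drift problem can both be shown in a small sketch: fit a least-squares slope over the most recent readings and compare only readings taken with the same instrument. This Python example assumes higher scores mean more severe symptoms; the instrument labels, the four-reading window, the one-point-per-week threshold, and the function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Assessment:
    when: date
    instrument: str  # hypothetical label for the questionnaire used
    score: float     # assumed: higher score = more severe symptoms


def slope_per_week(points):
    """Ordinary least-squares slope of score against time, in points per week."""
    t0 = points[0].when
    xs = [(p.when - t0).days / 7 for p in points]
    ys = [p.score for p in points]
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        return 0.0  # all readings on one day: no trend can be estimated
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx


def deterioration_flag(history, window=4, max_weekly_rise=1.0, min_points=3):
    """Flag a sustained worsening trend over recent readings from one instrument.

    Scores from different instruments are on different scales, so only
    readings from the most recent instrument are compared (a simple guard
    against the drift a tool change introduces).
    """
    ordered = sorted(history, key=lambda a: a.when)
    if not ordered:
        return {"flag": False, "reason": "no readings"}
    current = ordered[-1].instrument
    recent = [a for a in ordered if a.instrument == current][-window:]
    if len(recent) < min_points:
        # Reported explicitly so missing data is not mistaken for stability.
        return {"flag": False, "reason": "too few comparable readings"}
    slope = slope_per_week(recent)
    return {"flag": slope > max_weekly_rise, "slope": slope,
            "instrument": current, "n": len(recent)}


weekly = [Assessment(date(2026, 1, d), "tool_a", s)
          for d, s in [(5, 8), (12, 10), (19, 13), (26, 15)]]
print(deterioration_flag(weekly))  # slope 2.4 points/week, so flag is True
```

Fitting a slope over several readings, rather than reacting to one high score, matches the trend-oriented review described above. When a tool change leaves too few comparable readings, the function says so instead of silently reporting no deterioration.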

Structural Limits in Mental Health AI Deployment

Across deployments, the limits of AI in mental health are rarely algorithmic alone. They are structural. Data discipline, population representation, workflow design, and regulatory clarity shape model performance. The most consistent constraints include:

- Unstable diagnostic labels. Psychiatric diagnoses can change over time, weakening the reliability of training data.

- Sparse or delayed outcome validation. Suicide attempts, relapse events, and treatment outcomes are often under-recorded or documented retrospectively.

- Population bias. Training datasets may reflect regional, institutional, or socioeconomic patterns, limiting the extent to which models generalize across populations.

- Documentation variability. Inconsistent record-keeping reduces signal stability, particularly in longitudinal systems.

- Alert fatigue and override behavior. Poor threshold alignment and unclear outputs reduce trust and increase the need for manual overrides.

- Workflow friction. EHR integration and established review processes introduce technical and behavioral resistance.

- Regulatory ambiguity. Classification standards and accountability boundaries remain unclear in many jurisdictions.

Operational maturity, including governance, data standardization, and integration discipline, ultimately determines impact more than model sophistication.
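Several of these constraints can be measured before any model is trained. The Python sketch below computes three simple indicators from hypothetical record dictionaries: how often a patient's recorded diagnosis changes (label instability), the share of records with a documented outcome (outcome sparsity), and the missing-diagnosis rate per site (documentation variability). The field names, diagnosis codes, site names, and `data_audit` itself are assumptions for illustration.

```python
from collections import defaultdict


def data_audit(records):
    """Pre-modeling audit over hypothetical record dicts with keys:
    patient_id, diagnosis (str or None), outcome_recorded (bool), site (str).
    """
    if not records:
        raise ValueError("no records to audit")

    # Unstable labels: share of labelled patients whose diagnosis changed.
    labels = defaultdict(set)
    for r in records:
        if r["diagnosis"]:
            labels[r["patient_id"]].add(r["diagnosis"])
    changed = sum(1 for dx in labels.values() if len(dx) > 1)
    label_change_rate = changed / len(labels) if labels else 0.0

    # Sparse outcomes: share of records with a documented outcome.
    outcome_completeness = sum(bool(r["outcome_recorded"]) for r in records) / len(records)

    # Documentation variability: missing-diagnosis rate per site.
    per_site = defaultdict(lambda: [0, 0])  # site -> [missing, total]
    for r in records:
        per_site[r["site"]][0] += int(not r["diagnosis"])
        per_site[r["site"]][1] += 1
    missing_dx_by_site = {site: m / t for site, (m, t) in per_site.items()}

    return {"label_change_rate": label_change_rate,
            "outcome_completeness": outcome_completeness,
            "missing_dx_by_site": missing_dx_by_site}


sample = [
    {"patient_id": "a", "diagnosis": "dx_1", "outcome_recorded": True, "site": "north"},
    {"patient_id": "a", "diagnosis": "dx_2", "outcome_recorded": False, "site": "north"},
    {"patient_id": "b", "diagnosis": "dx_3", "outcome_recorded": True, "site": "south"},
    {"patient_id": "c", "diagnosis": None, "outcome_recorded": False, "site": "south"},
]
print(data_audit(sample))
# {'label_change_rate': 0.5, 'outcome_completeness': 0.5, 'missing_dx_by_site': {'north': 0.0, 'south': 0.5}}
```

None of these numbers requires a model, which is the point: they show whether the data can support one before any modeling effort is spent.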

Lessons from Omdena’s Mental Health AI Projects

Project A: Mental Health and Well-being Support in Bhutan

Mental health services in Bhutan faced limited specialist capacity, fragmented documentation, and delayed identification of psychological distress. Screening varied across regions, and referrals often relied on subjective judgment instead of structured tools. In collaboration with the GovTech Agency of Bhutan, the initiative developed an AI-assisted screening framework to standardize assessment and referral.

The team applied supervised classification to structured symptom inputs and contextual indicators from community assessments. When free-text responses were available, they used basic natural language processing to extract relevant signals. The system translated these inputs into calibrated risk tiers to guide referral prioritization rather than automated diagnoses.

During pilot deployment, the framework improved screening structure and clarified prioritization. However, unstable diagnostic labels and limited longitudinal outcome data constrained reliability. The project showed that locally aligned feature design and disciplined validation mattered more than model complexity.
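Calibrated risk tiers only mean what they say if predicted probabilities match observed rates: among cases scored around 0.3, roughly 30% should go on to have the outcome. A common validation check is a reliability table with an expected calibration error, sketched below in Python. This is a generic technique, not the project's actual validation code; the five equal-width bins and the function names are assumptions.

```python
def reliability_table(probs, labels, n_bins=5):
    """Group predictions into equal-width probability bins and compare the
    mean predicted risk with the observed event rate in each bin."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the top bin
        bins[idx].append((p, y))
    table = []
    for i, members in enumerate(bins):
        if not members:
            continue  # empty bins carry no evidence either way
        n = len(members)
        table.append({"bin": i, "n": n,
                      "mean_predicted": sum(p for p, _ in members) / n,
                      "observed_rate": sum(y for _, y in members) / n})
    return table


def expected_calibration_error(probs, labels, n_bins=5):
    """Size-weighted average gap between predicted and observed rates."""
    table = reliability_table(probs, labels, n_bins)
    total = sum(row["n"] for row in table)
    return sum(row["n"] / total * abs(row["mean_predicted"] - row["observed_rate"])
               for row in table)


probs = [0.1, 0.1, 0.9, 0.9]
outcomes = [0, 0, 1, 1]
print(round(expected_calibration_error(probs, outcomes), 3))  # 0.1
```

Each row reports its sample size because, with limited longitudinal outcome data, sparsely populated bins give noisy estimates; a small calibration error computed from a handful of outcomes is weak evidence.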

Project B: Real-Time Youth Mental Health Monitoring Using AI and NLP

Organizations monitoring youth mental health signals online struggled with the scale and variability of unstructured text. Manual review could not keep pace with message volume, and evolving language made it difficult to distinguish real distress signals from noise. In collaboration with Kids Help Phone, the initiative developed an NLP-based framework to structure youth-generated text into prioritized review indicators.

The system combined text preprocessing, sentiment modeling, and supervised classification trained on annotated examples. Feature extraction focused on semantic patterns rather than keywords to reduce false positives. Outputs were delivered via dashboards to support human review rather than automated intervention.

The framework improved the efficiency of signal organization and prioritization; however, limited ground-truth data and sensitivity to threshold settings constrained stability. As in the Bhutan project, structural factors shaped reliability more than algorithm choice, and human oversight remained essential for sustainable impact.
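Threshold sensitivity can be made visible by sweeping candidate thresholds over an annotated sample and reporting precision, recall, and reviewer workload at each one. The Python sketch below uses six made-up scores and labels; it is not drawn from the Kids Help Phone project, and `threshold_sweep` is a hypothetical helper.

```python
def threshold_sweep(scores, labels, thresholds):
    """Precision and recall of 'flag for review' at each candidate threshold.

    scores: model outputs for annotated messages; labels: 1 = annotators judged
    the message a genuine distress signal, 0 = not.
    """
    rows = []
    for t in thresholds:
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 0)
        fn = sum(1 for s, y in zip(scores, labels) if s < t and y == 1)
        flagged = tp + fp
        rows.append({
            "threshold": t,
            "flagged": flagged,  # reviewer workload
            "precision": tp / flagged if flagged else None,
            "recall": tp / (tp + fn) if tp + fn else None,
        })
    return rows


scores = [0.95, 0.80, 0.65, 0.60, 0.40, 0.20]
labels = [1, 1, 0, 1, 0, 0]
for row in threshold_sweep(scores, labels, [0.5, 0.7]):
    print(row)
# {'threshold': 0.5, 'flagged': 4, 'precision': 0.75, 'recall': 1.0}
# {'threshold': 0.7, 'flagged': 2, 'precision': 1.0, 'recall': 0.6666666666666666}
```

In this toy sample, moving the threshold from 0.5 to 0.7 halves the review workload but misses one of the three genuine signals. With limited ground truth, a single relabelled example can shift these figures noticeably, which is why threshold choices need ongoing human review.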

Considerations for Organizations Evaluating AI Clinical Decision Support

Organizations evaluating AI-enabled clinical decision support, including many emerging mental health startups, should prioritize operational discipline over model selection. In mental health care, data readiness, workflow integration, and governance determine feasibility more than model sophistication. The following considerations reduce implementation risk:

- Define a specific decision problem first. Identify a narrow, high-friction decision point such as risk prioritization, intake routing, or longitudinal monitoring.

- Audit and standardize data before modeling. Assess label stability, outcome completeness, and documentation consistency. Model refinement cannot correct weak data foundations.

- Pilot with limited scope. Deploy within a defined workflow segment before scaling. Early calibration prevents wider disruption.

- Build oversight into system architecture. Embed structured human review checkpoints and make override behavior visible and measurable.

- Measure adoption and trust, not just accuracy. Track clinician engagement, override rates, and workflow impact alongside performance metrics.
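The last two considerations can be operationalized by logging each alert's review outcome. This Python sketch assumes a hypothetical `ReviewEvent` record and computes the review rate, the override rate, and the number of overrides lacking a documented reason; the field names and action labels are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewEvent:
    alert_id: str
    reviewed: bool                # did a clinician open the alert?
    action: Optional[str] = None  # "accepted" or "overridden" once reviewed
    reason: Optional[str] = None  # free-text override reason, if captured


def oversight_metrics(events):
    """Adoption and trust indicators to track alongside accuracy."""
    reviewed = [e for e in events if e.reviewed]
    overridden = [e for e in reviewed if e.action == "overridden"]
    return {
        # Low review rates may indicate alerts are being ignored (alert fatigue).
        "review_rate": len(reviewed) / len(events) if events else 0.0,
        # High override rates may indicate thresholds or outputs misaligned with clinical judgment.
        "override_rate": len(overridden) / len(reviewed) if reviewed else 0.0,
        # Overrides without a reason cannot inform later threshold recalibration.
        "overrides_without_reason": sum(1 for e in overridden if not e.reason),
    }


log = [
    ReviewEvent("a1", True, "accepted"),
    ReviewEvent("a2", True, "overridden", "recent contact, known history"),
    ReviewEvent("a3", True, "overridden"),
    ReviewEvent("a4", False),
]
print(oversight_metrics(log))
# {'review_rate': 0.75, 'override_rate': 0.6666666666666666, 'overrides_without_reason': 1}
```

Tracking these figures over time, rather than once at launch, shows whether clinicians are engaging with the system or quietly working around it.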

Across these decision areas, AI improves structure and visibility more reliably than it improves prediction. Ultimately, operational maturity determines impact.

Conclusion

AI in mental health clinical decision support functions as a signal-structuring layer rather than a replacement for clinical judgment. It can organize complex records, surface risk indicators, and reveal longitudinal patterns, while clinicians retain responsibility for interpreting those signals within context.

The complexity of mental health data, including variable documentation, evolving diagnoses, and cultural differences in symptom expression, limits the feasibility of full automation. Model performance alone does not resolve these structural constraints.

Long-term value depends on responsible deployment. Clear scope definition, careful validation, human oversight, and data governance determine whether AI becomes reliable decision support or remains experimental. In mental health care, implementation discipline ultimately defines real-world value.

Omdena helps organizations implement AI for mental health clinical decision support through structured design, careful validation, and responsible deployment. Organizations exploring AI in mental health can connect with Omdena to translate AI capabilities into trusted clinical decision support.