Best Practices for Governing Agentic AI Systems in 2026

Explore best practices for governing agentic AI systems to reduce risks, improve accountability, and safely scale autonomous AI in real-world applications.

Agentic AI is quickly becoming the next step in how AI systems work. Instead of just responding to prompts, these systems can plan, make decisions, and complete multi-step tasks on their own. Traditional AI is mostly reactive. It waits for instructions. Agentic AI, on the other hand, takes initiative and works toward a goal.

This shift brings real benefits. But it also creates new risks. When systems act with more autonomy, mistakes can scale faster. Unintended actions, misuse, and unclear accountability become serious concerns.

As more companies start adopting these systems, governance is no longer optional. It is essential.

To safely scale agentic AI, organizations must adopt structured governance practices across the AI lifecycle. In this article, I’ll break down the key risks, challenges, and practical measures needed to govern agentic AI systems effectively.

TL;DR (Quick Summary):

- Agentic AI systems are autonomous, goal-driven systems that can plan, decide, and act with minimal human input.

- More autonomy introduces risks like unintended actions, misalignment, security vulnerabilities, and bias.

- Governance is critical due to accountability gaps across developers, deployers, and users.

- Core governance practices include: evaluating task suitability, constraining actions, designing safe defaults, ensuring transparency, monitoring systems, assigning accountability, and maintaining human control.

- Advanced governance requires lifecycle-based controls, policy-as-code, and preparation for multi-agent systems.

- System-level risks include adoption pressure, job displacement, cybersecurity threats, and correlated failures.

- Practical framework: define use cases, set boundaries, monitor systems, assign ownership, and prepare incident response plans.

- Bottom line: Strong governance is essential to safely scale agentic AI and build trust while minimizing risk.

What Are Agentic AI Systems?

Agentic AI systems are goal-driven AI systems that can plan, act, and adapt with minimal human supervision. Instead of just responding to inputs, they actively pursue objectives and make decisions on their own.

These systems are defined by a few key characteristics:

- Goal complexity: They can handle multi-step, sophisticated objectives

- Environmental complexity: They operate across dynamic and changing environments

- Adaptability: They adjust to new or unexpected situations in real time

- Independent execution: They complete tasks with limited human intervention

You can already see this in action. Autonomous assistants can plan trips or manage workflows. In healthcare and finance, AI agents analyze data, generate insights, and take actions in real time.

These capabilities unlock massive value. But they also introduce unique governance challenges, which makes it critical to understand why governing agentic AI is no longer optional.

Why Governance of Agentic AI Is Critical

Agentic AI systems introduce a new class of risks because they can act independently, make decisions, and interact with real-world systems. Unlike traditional AI, their autonomy allows mistakes to scale quickly and unpredictably.

Unique Risks Introduced by Agentic AI

Some of the most critical risks include:

- Unintended actions such as incorrect financial decisions or system changes

- Misalignment with user intent, where the system pursues the wrong goal

- Security vulnerabilities and misuse, including exploits through APIs or integrations

- Bias and ethical dilemmas, especially in high-stakes decisions

These risks arise because autonomous systems can operate without continuous oversight, increasing the chance of harm.

The Accountability Problem

Governance becomes even harder because multiple stakeholders are involved:

- Model developers

- System deployers

- Users

This creates diffused responsibility, where no single party is fully accountable. As autonomy increases, traditional accountability frameworks struggle to keep up.

Real-World Implications

Without proper governance, these risks translate into real consequences:

- Financial losses from incorrect actions

- Compliance and regulatory risks

- Reputational damage for organizations

- Systemic risks from large-scale or correlated failures

As agentic AI adoption accelerates, governance shifts from a technical concern to a business-critical priority.

To address these challenges, organizations must implement structured and practical governance practices, which we’ll explore next.

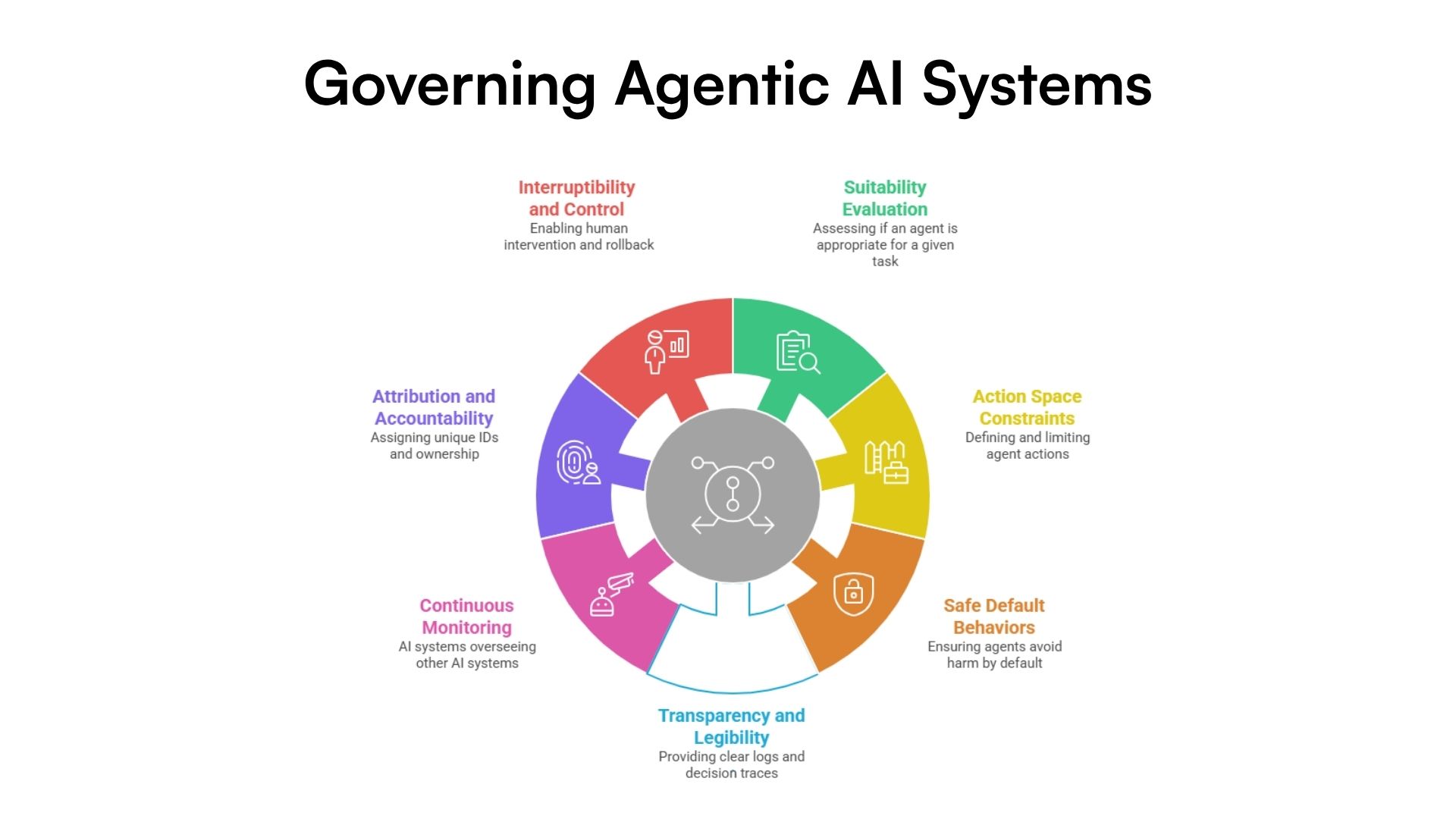

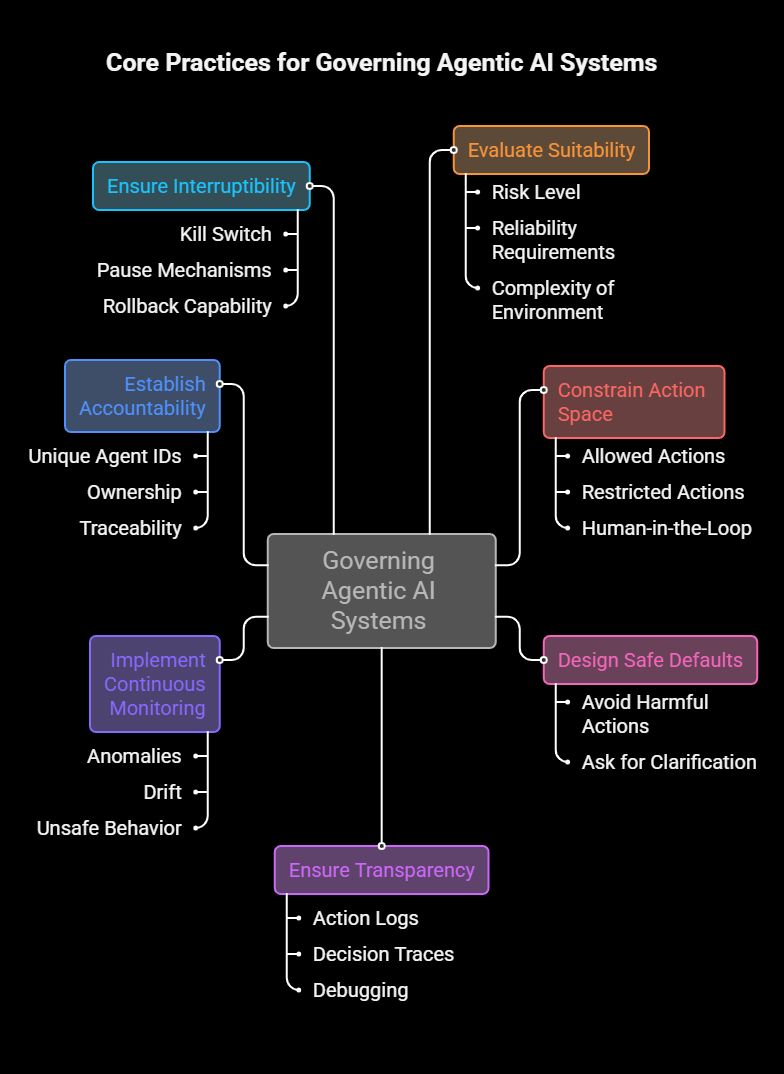

Core Practices for Governing Agentic AI Systems

Governing agentic AI systems requires more than high-level principles. It needs clear, practical controls that work across real-world deployments. The following practices form a strong foundation for building safe, reliable, and accountable agentic systems.

1. Evaluate Suitability for the Task

Not every problem requires an autonomous agent. In many cases, a simpler AI system or even a rule-based workflow may be more reliable and easier to control.

Before deploying an agentic system, it is important to assess:

- The risk level of the task

- The required reliability and accuracy

- The complexity of the environment in which the agent will operate

For example, using an agent to automate internal reporting may be low risk. Using it to execute financial transactions or make medical decisions is far more sensitive.

A key best practice is to test systems end-to-end under realistic conditions. This includes edge cases, failure scenarios, and long sequences of actions. Many failures only appear when agents operate over time, not in isolated test cases. Strong evaluation helps teams decide whether autonomy is appropriate in the first place.

2. Constrain the Agent’s Action Space

Once an agent is deployed, its capabilities must be clearly bounded. Without constraints, even well-designed systems can take harmful or unintended actions.

This starts with defining:

- What the agent is allowed to do

- What it is not allowed to do

These rules should be enforced through system design, not just instructions.

In practice, this often includes human-in-the-loop mechanisms. High-risk actions such as payments, contract approvals, or system changes should require explicit human confirmation. Threshold-based controls can also help. For instance, an agent may be allowed to make purchases below a certain value but must request approval above that limit.

The goal is to reduce the blast radius of potential errors while still preserving useful autonomy.
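To make the idea of enforcing limits in system design more concrete, here is a minimal Python sketch of an action gate. The action names, the `PURCHASE_LIMIT` value, and the `gate` function are all hypothetical and chosen only for illustration. A real deployment would load these from its own policy source.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Action:
    kind: str            # e.g. "purchase" or "send_email"
    amount: float = 0.0  # monetary value, if the action has one


# Hypothetical policy values, assumed for this example only.
ALLOWED_KINDS = {"purchase", "send_email"}
HIGH_RISK_KINDS = {"contract_approval", "system_change"}
PURCHASE_LIMIT = 100.0


def gate(action: Action) -> Decision:
    """Decide whether an action runs, waits for a human, or is blocked."""
    # High-risk actions always go to a human, regardless of other rules.
    if action.kind in HIGH_RISK_KINDS:
        return Decision.NEEDS_APPROVAL
    # Anything not explicitly allowed is denied.
    if action.kind not in ALLOWED_KINDS:
        return Decision.DENY
    # Threshold control: large purchases need explicit confirmation.
    if action.kind == "purchase" and action.amount > PURCHASE_LIMIT:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW
```

The key design choice is that the gate sits outside the agent. The agent can propose any action, but only the gate decides whether it executes, so a confused or manipulated agent cannot talk its way past the limit.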

3. Design Safe Default Behaviors

Even with clear instructions, users rarely specify every detail of what they want. Agentic systems must fill in these gaps. This is where default behavior becomes critical.

Agents should be designed to:

- Avoid actions that are costly, irreversible, or risky

- Ask for clarification when instructions are unclear

- Prefer safer, lower-impact options when multiple paths exist

A simple guiding principle works well here:

When in doubt, do less harm.

For example, if an agent is unsure about making a purchase, it should pause and ask the user instead of proceeding. If multiple solutions exist, it should choose the least disruptive one.

Designing these defaults carefully can prevent a large number of real-world failures.
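One way to picture these defaults is as a small selection function. The sketch below is an assumption on my part, not a standard algorithm: the `impact` score, the `reversible` flag, and the `min_confidence` threshold are hypothetical inputs that a real system would need to estimate somehow.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Option:
    name: str
    impact: int        # hypothetical score: higher means more disruptive
    reversible: bool   # can the action be undone afterwards?


def choose_default(options: list[Option], confidence: float,
                   min_confidence: float = 0.8) -> Optional[Option]:
    """Return the safest option, or None to signal 'ask the user'."""
    # Unclear instructions: pause and ask instead of guessing.
    if confidence < min_confidence:
        return None
    # Never pick an irreversible action by default.
    safe = [o for o in options if o.reversible]
    if not safe:
        return None
    # Among the remaining options, prefer the least disruptive one.
    return min(safe, key=lambda o: o.impact)
```

Returning `None` here stands for "stop and ask for clarification". The caller is expected to treat it as a request for human input, not as a failure.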

4. Ensure Transparency and Legibility

For users to trust and control agentic systems, they need visibility into what the system is doing and why.

This requires making agent activity legible through:

- Clear action logs that show what the agent did

- Decision traces or summaries that explain why it acted

This transparency supports several important outcomes. It helps teams debug issues, allows users to catch mistakes early, and creates an audit trail for compliance and governance.

However, there is a trade-off. As agentic systems become more advanced, their reasoning can become complex and harder to interpret. Long or highly technical decision traces may overwhelm users.

To address this, systems should present simplified explanations while still preserving detailed logs for deeper inspection when needed.
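A simple way to support both views is to store the short explanation and the detailed trace in the same record. The following Python sketch assumes a hypothetical `LogEntry` structure. The field names are mine, not from any particular logging standard.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class LogEntry:
    agent_id: str
    action: str
    reason: str  # short, human-readable explanation
    trace: list[str] = field(default_factory=list)  # detailed decision steps
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def summary(self) -> str:
        """One-line view for end users."""
        return f"{self.agent_id} did '{self.action}' because {self.reason}"

    def to_json(self) -> str:
        """Full record, including the trace, for audits and debugging."""
        return json.dumps(asdict(self))
```

Users see `summary()`, while auditors and engineers can pull the full JSON record when they need to inspect the individual steps.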

5. Implement Continuous Monitoring

In practice, humans cannot review every action taken by an agent. This is where automated monitoring becomes essential.

Many modern systems use AI to monitor other AI systems. These monitoring layers can analyze behavior in real time and detect:

- Anomalies or unexpected actions

- Performance drift over time

- Unsafe or policy-violating behavior

This approach allows organizations to scale oversight without slowing down operations.

At the same time, monitoring introduces trade-offs. It increases operational costs, especially if additional models are used. It also raises privacy concerns, since monitoring often requires access to user data and system activity.

Balancing effective oversight with cost and privacy is a key challenge in production systems.
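Monitoring does not always need a second model. As a deliberately simple stand-in for the AI-based monitors described above, this sketch tracks the failure rate over a sliding window of recent actions and raises an alert when it drifts too high. The `DriftMonitor` class, the window size, and the threshold are illustrative assumptions.

```python
from collections import deque


class DriftMonitor:
    """Flags when the recent failure rate exceeds a threshold."""

    def __init__(self, window: int = 20, max_failure_rate: float = 0.25):
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)
        self.max_failure_rate = max_failure_rate

    def record(self, success: bool) -> bool:
        """Record one outcome; return True if an alert should fire."""
        self.outcomes.append(success)
        # Wait until the window is full to avoid noisy early alerts.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        failures = self.outcomes.count(False)
        return failures / len(self.outcomes) > self.max_failure_rate
```

A cheap rule-based check like this can run on every action, with more expensive model-based review reserved for the cases it flags. That is one way to keep monitoring costs under control.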

6. Establish Attribution and Accountability

When something goes wrong, it must be possible to trace what happened and who is responsible. Without this, governance breaks down.

This is why attribution is critical. Each agent instance should have:

- A unique identifier

- A clearly defined owner, whether an individual or organization

This enables full traceability. Teams can track which agent performed an action, under what conditions, and on whose behalf.

In high-stakes scenarios such as financial services, healthcare, or enterprise automation, this level of accountability is essential. It supports auditing, compliance, and legal responsibility.

It also creates the right incentives. When responsibility is clear, stakeholders are more likely to design and operate systems carefully.
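In code, attribution can be as simple as attaching an identity record to every action an agent takes. The sketch below uses Python's standard `uuid` module to generate the identifier. The `AgentIdentity` and `AttributedAction` types, and the example owner and user names, are hypothetical.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    owner: str         # accountable individual or organization
    on_behalf_of: str  # the user or team the agent is acting for
    # Each agent instance gets its own random identifier.
    agent_id: str = field(default_factory=lambda: str(uuid.uuid4()))


@dataclass(frozen=True)
class AttributedAction:
    identity: AgentIdentity
    action: str

    def describe(self) -> str:
        """Answer: which agent did what, owned by whom, for whom?"""
        i = self.identity
        return (f"agent {i.agent_id} (owner: {i.owner}) "
                f"performed '{self.action}' for {i.on_behalf_of}")
```

Both records are frozen, so an identity cannot be silently changed after an action has been recorded against it.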

7. Ensure Interruptibility and Human Control

No matter how capable an agent becomes, humans must retain the ability to intervene.

This includes:

- A kill switch or pause mechanism to stop the system immediately

- The ability to roll back actions when possible

- Clear escalation paths when something goes wrong

Interruptibility is especially important in scenarios where agents operate continuously or interact with critical systems. Without it, small issues can quickly escalate into large failures.

For example, if an agent begins executing incorrect transactions or spreading incorrect information, teams must be able to stop it without delay.

This aligns with core safety principles in system design. Autonomy should never come at the cost of control.
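A kill switch can be sketched with a shared flag that the agent loop checks before every step. This example uses `threading.Event` from the Python standard library so the flag can be set safely from another thread. The `KillSwitch` wrapper and `run_agent` loop are hypothetical names for illustration.

```python
import threading
from typing import Callable


class KillSwitch:
    """A stop flag that operators can set from any thread."""

    def __init__(self) -> None:
        self._stopped = threading.Event()

    def stop(self) -> None:
        self._stopped.set()

    def is_stopped(self) -> bool:
        return self._stopped.is_set()


def run_agent(steps: list[Callable[[], str]], switch: KillSwitch) -> list[str]:
    """Run steps in order, checking the switch before each one."""
    completed = []
    for step in steps:
        if switch.is_stopped():
            break  # halt immediately; remaining steps never run
        completed.append(step())
    return completed
```

Note that this only stops future steps. A step that is already running will finish, and anything it did still needs a separate rollback path.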

These practices work best when implemented together. Each one addresses a different layer of risk, from prevention to detection to response. Combined, they create a defense-in-depth approach that makes agentic systems safer and more reliable.

However, as these systems evolve, governance must also go beyond these core practices. In the next section, I’ll explore more advanced considerations that organizations should start preparing for as agentic AI continues to scale.

Advanced Governance Considerations

As agentic AI systems scale, governance needs to evolve beyond basic controls. Organizations are now moving toward more embedded, lifecycle-driven, and automated approaches to keep up with increasing autonomy and complexity.

Lifecycle-Based Governance

Governance can no longer be a one-time checklist. It must operate across the entire lifecycle of an agentic system:

- Development: Designing safe architectures, defining constraints, and testing risks

- Deployment: Setting permissions, monitoring behavior, and enforcing controls

- Usage: Continuously auditing actions, updating policies, and managing real-world impact

Recent research highlights that agentic systems behave like “living systems,” which means governance must also be continuous and adaptive rather than static.

Policy-as-Code and Automation

Manual governance does not scale with autonomous systems. This is where policy-as-code becomes important.

Instead of relying on documentation, organizations encode rules directly into systems. These policies automatically control what agents can and cannot do. More advanced approaches even allow policies to adapt dynamically based on system behavior and risk signals.

This makes governance enforceable, consistent, and scalable across environments.
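Here is a minimal sketch of what policy-as-code can look like, assuming a made-up rule format rather than any real policy engine. Rules are plain data, evaluated in order, with a default of deny. The `Rule` fields, the `risk` score, and the example policy are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    action: str    # action name, or "*" to match any action
    max_risk: int  # rule applies when the request's risk <= max_risk
    effect: str    # "allow", "require_approval", or "deny"


# Hypothetical policy. The first matching rule wins.
POLICY = [
    Rule("read_report", max_risk=10, effect="allow"),
    Rule("send_payment", max_risk=3, effect="require_approval"),
    Rule("*", max_risk=1, effect="allow"),
]


def evaluate(action: str, risk: int, policy: list[Rule] = POLICY) -> str:
    """Return the effect of the first rule that matches the request."""
    for rule in policy:
        if rule.action in (action, "*") and risk <= rule.max_risk:
            return rule.effect
    return "deny"  # default-deny when nothing matches
```

Because the policy is data, it can be versioned, reviewed, and tested like any other code, and the same rules can be applied in every environment.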

Emerging Approaches

New approaches are also shaping the future of agentic AI governance. Blockchain-based systems are being explored to create tamper-evident logs of agent actions and enforce rules through smart contracts.
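The core idea behind such logs can be shown without a full blockchain. The sketch below is a simplified stand-in: a plain hash chain built with Python's `hashlib`, where each entry stores the hash of the previous one. It has no consensus, distribution, or smart contracts, and the `append_entry` and `verify` helpers are hypothetical names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(action: str, prev_hash: str) -> str:
    body = {"action": action, "prev_hash": prev_hash}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(chain: list[dict], action: str) -> None:
    """Add an entry that is linked to the hash of the previous one."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"action": action, "prev_hash": prev_hash,
                  "hash": _digest(action, prev_hash)})


def verify(chain: list[dict]) -> bool:
    """Recompute every hash; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["action"], entry["prev_hash"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Changing any past entry invalidates its hash and every link after it, so tampering becomes detectable. It does not prevent someone from rewriting the whole chain, which is the gap that distributed ledgers aim to close.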

At the same time, multi-agent systems introduce new challenges. When multiple agents interact, risks can compound, making coordination, monitoring, and accountability much harder to manage.

These trends show that governance must evolve alongside the technology. In the next section, I’ll explore the broader risks that emerge at a system and societal level as agentic AI adoption grows.

Indirect and System-Level Risks

Beyond direct failures, agentic AI introduces broader system-level risks. As adoption accelerates, many organizations prioritize speed over safety, leading to weaker governance and overlooked safeguards.

Agentic systems are also reshaping the workforce. Automation is expanding into knowledge work, raising concerns about job displacement and changing skill requirements. At the same time, cybersecurity dynamics are shifting, with AI acting as both a defense tool and an attack vector.

Another key risk is correlated failure. When multiple systems rely on similar models or infrastructure, a single flaw can spread widely.

These challenges highlight the need for industry-wide standards and regulations. Next, I’ll outline a practical governance framework.

Building a Practical Governance Framework

To move from theory to execution, organizations need a structured and actionable governance framework that can scale with agentic AI systems. Modern frameworks focus on combining risk management, control mechanisms, and continuous oversight.

Here’s a simple, practical approach:

- Define use cases and risk levels: Identify where agents will be used and assess their potential impact. High-risk use cases require stricter controls and oversight.

- Set boundaries and autonomy levels: Clearly define what agents can and cannot do. Limit permissions and apply least-privilege access to reduce unintended actions.

- Implement monitoring and logging: Track agent actions, decisions, and system interactions in real time to ensure transparency and auditability.

- Assign ownership and accountability: Define clear responsibilities across teams to avoid governance gaps and ensure traceability.

- Prepare incident response mechanisms: Establish rollback plans, escalation paths, and fail-safe controls to handle unexpected failures.

This framework helps organizations operationalize governance and scale agentic AI systems safely and responsibly.

The Future of Agentic AI Depends on Governance

Agentic AI is powerful. It can automate complex work, improve efficiency, and unlock new capabilities across industries. But with that power comes real risk. As systems become more autonomous, failures can scale faster and accountability becomes harder to manage.

That is why governance is not optional. It is foundational.

Organizations that invest in strong governance early will be better positioned to build trust, operate safely, and adapt as the technology evolves. They will also gain a clear competitive advantage by scaling agentic systems with confidence, not uncertainty.