Small Language Models Are the Future of Agentic AI

Discover why small language models are the future of agentic AI and how SLM-first systems outperform LLMs in real workflows.

Most AI agents today are inefficient by design. Adoption is growing fast: recent research shows that more than half of large enterprises are already using AI agents, and that share continues to rise. Yet many of these systems rely on large language models for every step, which leads to higher costs, slower responses, and sometimes inconsistent outputs. As agents scale, these issues quickly become major bottlenecks.

The real problem isn’t model capability—it’s system design. Agentic AI isn’t about solving complex problems every time. It’s mostly about executing structured, repeatable tasks efficiently. Yet most systems treat every step as if it needs maximum LLM intelligence.

This is where things are changing. Small Language Models (SLMs) offer a more efficient foundation for building scalable, reliable agents. In this article, I’ll explain why SLMs are becoming central to agentic AI, how they outperform larger models in real workflows, and what this means for designing better AI systems.

TL;DR (Quick Summary):

- Most AI agents are inefficient because they rely on large language models (LLMs) for every step.

- Agentic AI is mainly about executing structured, repeatable tasks—not solving complex problems every time.

- Small Language Models (SLMs) are faster, cheaper, and more reliable for these workflows.

- SLM-first architectures use small models for most tasks and LLMs only for complex reasoning.

- This hybrid approach reduces cost, improves speed, and makes AI agents more scalable.

- The future of agentic AI lies in using the right model for the right task—not the biggest model.

What Are Small Language Models (SLMs)?

Small Language Models (SLMs) are compact AI models designed to handle language tasks efficiently without the heavy compute of large models. While there’s no strict definition, they typically have fewer than about 10 billion parameters (often 3B–10B) and are optimized to run on limited hardware like a single GPU or even edge devices.

What makes them powerful isn’t size—it’s focus. SLMs are built to be fast, specialized, and easy to deploy locally, making them ideal for real-world workflows.

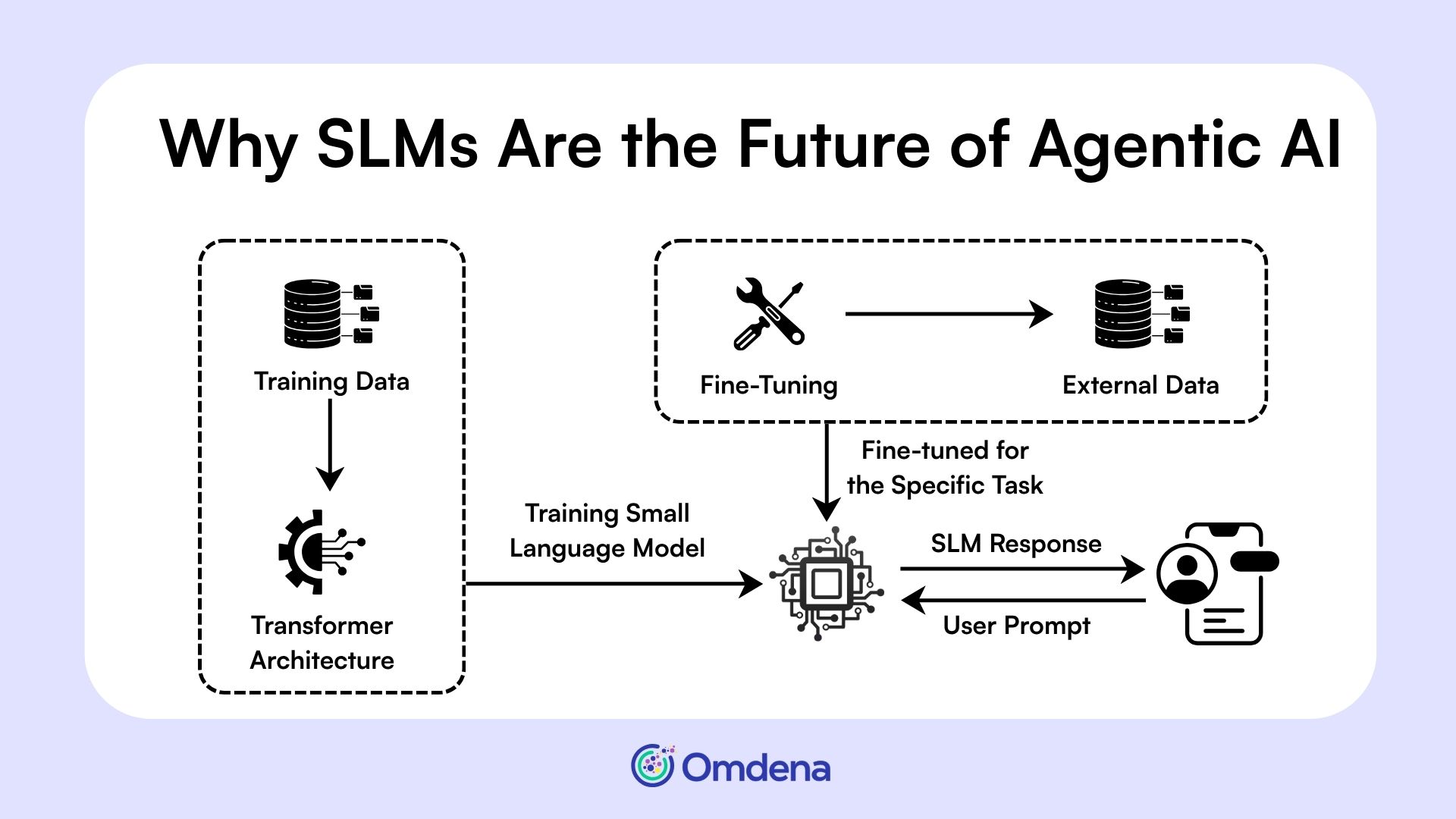

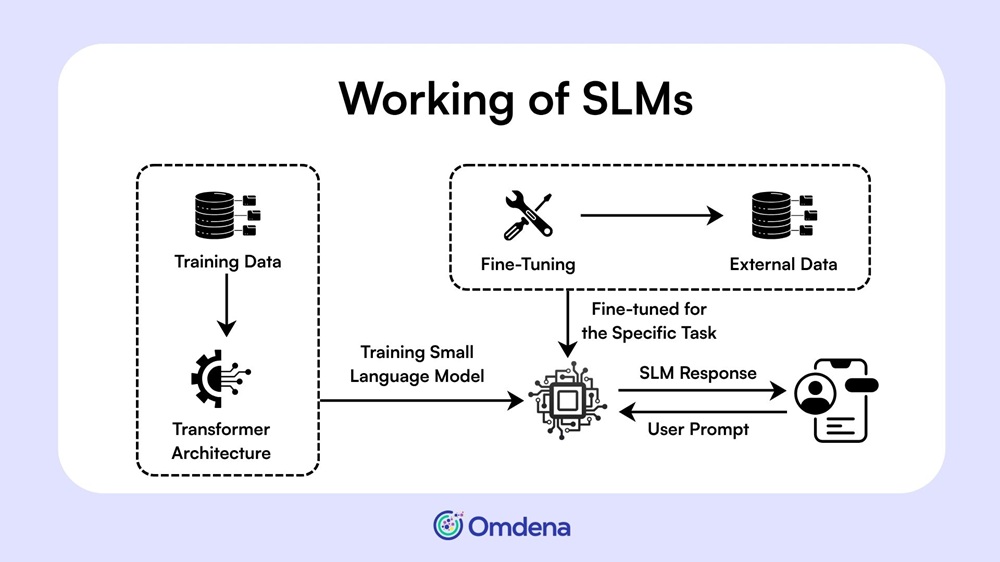

How SLMs Work

In simple terms:

- LLM = generalist

- SLM = specialist

That distinction matters: once AI systems move from single prompts to multi-step workflows, relying only on general-purpose large language models starts to break down.

Why LLM-Centric Agent Design Breaks at Scale

Agentic AI systems are not single prompts—they are multi-step workflows. A typical agent breaks a task into smaller steps: understanding input, calling APIs, processing data, and generating outputs. The problem? Many systems rely on large language models (LLMs) for every single step.

This creates compounding inefficiencies:

- Costs multiply quickly as each step triggers another expensive LLM call

- Latency increases, slowing down real-time workflows

- Outputs become inconsistent, especially in structured tasks

Research shows that most agent workloads involve repetitive, low-variation tasks, yet they are still handled by general-purpose models. In many cases, 40–70% of these tasks could be handled by smaller models instead.
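To make the compounding effect concrete, here is a minimal sketch of the arithmetic for a sequential workflow. The per-call prices and latencies are placeholder values I picked for illustration, not measured figures, and `ModelProfile` and `workflow_totals` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost_per_call_usd: float  # hypothetical figure, not a real price
    latency_s: float          # hypothetical figure, not a benchmark


# Placeholder numbers chosen only to make the arithmetic visible.
LLM = ModelProfile("large-model", cost_per_call_usd=0.02, latency_s=2.0)
SLM = ModelProfile("small-model", cost_per_call_usd=0.001, latency_s=0.2)


def workflow_totals(steps: int, slm_share: float) -> tuple[float, float]:
    """Return (cost, latency) for one run of a sequential workflow.

    slm_share is the fraction of steps sent to the small model;
    the remaining steps go to the large model.
    """
    slm_steps = round(steps * slm_share)
    llm_steps = steps - slm_steps
    cost = slm_steps * SLM.cost_per_call_usd + llm_steps * LLM.cost_per_call_usd
    latency = slm_steps * SLM.latency_s + llm_steps * LLM.latency_s
    return cost, latency


if __name__ == "__main__":
    # Compare an LLM-only agent with the 40% and 70% offload cases.
    for share in (0.0, 0.4, 0.7):
        cost, latency = workflow_totals(steps=10, slm_share=share)
        print(f"SLM share {share:.0%}: ${cost:.3f} per run, {latency:.1f}s")
```

The absolute numbers mean nothing on their own. The point is that both cost and latency are sums over steps, so every step moved to a cheaper model lowers the total for every run.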

The result is a system that is powerful—but inefficient at scale.

Agentic AI fails not because models are weak, but because systems are poorly designed.

This realization leads to a fundamental shift: instead of scaling model size, we need to rethink how models are used—which is where small language models come in.

Why Small Language Models Are Replacing LLMs at the Core of Agentic AI

As AI shifts from chatbots to autonomous agents, the focus moves from generating text to executing tasks efficiently at scale. Small Language Models (SLMs) enable this by prioritizing speed, cost, and reliability over raw intelligence.

Built for Repetitive, Structured Tasks

Most agent tasks are predictable: parsing inputs, calling APIs, formatting outputs, or routing decisions. These tasks don’t require broad world knowledge. They require consistency. Research shows that agentic workflows involve specialized, repetitive operations with little variation, making them ideal for SLMs. Rather than spending a general-purpose model on every such step, an agent can hand these tasks to SLMs, which handle them more efficiently by design.

Speed + Cost Efficiency at Scale

SLMs dramatically reduce operational overhead. Studies show they can be 10–30× more cost-efficient than large models for structured tasks. They also deliver faster inference, enabling real-time responses. This makes them far more practical for systems where agents run continuously across workflows.

Better Control and Reliability

In agentic systems, outputs must follow strict formats (JSON, APIs, schemas). Fine-tuned SLMs are better at adhering to these constraints. They produce more predictable outputs with fewer hallucinations, which is critical for automation-heavy environments.
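Whichever model produces the output, an agent usually checks it before acting on it. Below is a minimal sketch of that check using only the Python standard library. The `TOOL_CALL_SCHEMA` layout and the `parse_tool_call` helper are assumptions made for illustration, not part of any particular framework.

```python
import json

# Hypothetical schema for a tool call: field name -> required Python type.
TOOL_CALL_SCHEMA = {"tool": str, "arguments": dict}


def parse_tool_call(raw_output: str) -> dict:
    """Parse model output and check it against TOOL_CALL_SCHEMA.

    Raises ValueError if the text is not valid JSON, is not a JSON object,
    or has a missing or wrongly typed field.
    """
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in TOOL_CALL_SCHEMA.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"field {field!r} should be {expected_type.__name__}")
    return data
```

A call such as `parse_tool_call('{"tool": "get_invoice", "arguments": {"id": 42}}')` returns the parsed dictionary, while malformed output raises `ValueError`, which the agent can treat as a signal to retry or escalate.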

Deployability (Edge + Private Infrastructure)

Unlike the largest LLMs, which typically need multi-GPU servers or a hosted API, SLMs can run on local machines, private clouds, or edge devices. This improves privacy, reduces dependency on external APIs, and enables scalable deployment across organizations.

SLMs don’t outperform LLMs in intelligence—they outperform them in usefulness.

This shift naturally leads to a new way of designing AI systems—where SLMs form the core and larger models play a supporting role.

SLM-First Architectures: How Modern AI Agents Should Be Designed

Most early agentic systems follow a simple but flawed design: one large language model (LLM) handles everything. From understanding inputs to calling tools and generating outputs, the same model is used across the entire workflow. This “one-model-does-all” approach creates unnecessary cost, latency, and complexity.

Modern systems take a different path. Research increasingly points to SLM-first, heterogeneous architectures as the more efficient design pattern.

In modern AI agents:

- SLMs handle roughly 80% of tasks (routing, formatting, tool calls)

- LLMs are used only for complex reasoning or edge cases

This creates a simple execution flow:

Task → SLM → (if complex: escalate to LLM) → Execute

Because most agent tasks use only a narrow slice of model capability, smaller models can handle the bulk efficiently.
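The execution flow above can be sketched as a small router. This is a minimal illustration under stated assumptions: `slm_generate` and `llm_generate` are stand-ins for real model calls, and the complexity check is a simple lookup against a made-up set of routine task types. A real system might instead let the SLM itself flag when a task should be escalated.

```python
from typing import Callable

# Task types assumed to be routine enough for the small model (illustrative).
ROUTINE_TASKS = {"route", "format", "tool_call"}


def slm_generate(prompt: str) -> str:
    """Stand-in for a call to a small model (hypothetical)."""
    return f"[slm] {prompt}"


def llm_generate(prompt: str) -> str:
    """Stand-in for a call to a large model (hypothetical)."""
    return f"[llm] {prompt}"


def is_complex(task_type: str) -> bool:
    """Treat any task type outside the routine set as complex."""
    return task_type not in ROUTINE_TASKS


def run_task(
    task_type: str,
    prompt: str,
    small: Callable[[str], str] = slm_generate,
    large: Callable[[str], str] = llm_generate,
) -> str:
    """Send routine tasks to the small model and escalate the rest."""
    model = large if is_complex(task_type) else small
    return model(prompt)


if __name__ == "__main__":
    print(run_task("format", "Convert this order to JSON"))
    print(run_task("plan", "Design a migration strategy"))
```

Passing the two model functions in as parameters keeps the routing rule separate from any specific provider, so either side can be swapped without touching the rule.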

The future is not one powerful model, but many small coordinated ones.

This architectural shift is already visible in real-world systems—especially in how SLMs are being applied across practical workflows.

Real-World Use Cases Where SLMs Already Win

SLMs are already proving their value across real-world agentic workflows where speed, cost, and reliability matter most.

A. Workflow Automation

- Handling API calls, task routing, and data formatting

- Converting user input into structured outputs (JSON, queries)

- Automating repetitive backend processes with consistency

B. Enterprise Operations

- Finance: invoice processing, fraud checks

- Healthcare: summarization, data extraction

- Customer support: ticket routing and query handling

C. Developer Agents

- Generating boilerplate code and test cases

- Debugging logs and identifying issues

- Automating routine development tasks

These use cases highlight a clear pattern: SLMs excel where tasks are structured and repeatable. This naturally leads to a broader question—how do SLMs and LLMs work together in the future?

The Hybrid Future: SLMs + LLMs, Not SLM vs LLM

The future of agentic AI is not about choosing between SLMs and LLMs—it’s about combining them intelligently. Modern systems are increasingly adopting a hybrid approach, where each model plays a specific role based on task complexity.

LLMs still matter for:

- Complex reasoning and long-horizon planning

- Open-ended, ambiguous tasks

- Edge cases that require broad knowledge

SLMs, on the other hand, power the execution layer:

- Task routing, API orchestration, structured outputs

- Repetitive, high-frequency workflows

- Real-time, cost-sensitive operations

This hybrid design allows systems to stay efficient without losing capability. Instead of overusing large models, agents route tasks intelligently across models.

SLMs will handle the bulk of agent work; LLMs will handle the edge cases.

Challenges and Limitations of SLMs

While SLMs offer clear advantages, they are not without limitations. Understanding these trade-offs is important for designing balanced agentic systems.

- Limited general reasoning: SLMs struggle with complex, open-ended problems that require deep context or broad world knowledge. They are best suited for narrow, well-defined tasks.

- Requires orchestration: Using multiple SLMs across workflows introduces system complexity. Teams need proper routing, monitoring, and coordination layers to make them work effectively.

- Ecosystem still LLM-first: Most tools, benchmarks, and infrastructure are built around large models, making adoption and integration of SLMs slower in practice.

These challenges highlight why a hybrid approach remains essential.
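As one small piece of the monitoring layer mentioned above, here is a sketch of a counter that records which model handled each step. `RoutingMonitor` and its method names are hypothetical, and a production system would likely export these numbers to an existing metrics tool rather than keep them in memory.

```python
from collections import Counter, defaultdict


class RoutingMonitor:
    """Tracks which model handled each step and how long it took."""

    def __init__(self) -> None:
        self.calls: Counter = Counter()
        self.latency_s: defaultdict = defaultdict(float)

    def record(self, model_name: str, latency_s: float) -> None:
        """Register one completed step for the given model."""
        self.calls[model_name] += 1
        self.latency_s[model_name] += latency_s

    def share(self, model_name: str) -> float:
        """Fraction of all recorded steps handled by model_name."""
        total = sum(self.calls.values())
        return self.calls[model_name] / total if total else 0.0

    def mean_latency_s(self, model_name: str) -> float:
        """Average latency per step for model_name, or 0.0 if unused."""
        count = self.calls[model_name]
        return self.latency_s[model_name] / count if count else 0.0
```

Watching the LLM share over time gives a rough signal of whether routing is working: a share that keeps climbing suggests the small models are being asked to do more than they can handle.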

Ready to Build SLM-First AI Agents? Omdena Can Help

Agentic AI is entering a new phase. The focus is no longer on building bigger models, but on designing smarter systems that can operate efficiently at scale. As we’ve seen, most real-world agent workflows don’t require maximum intelligence at every step—they require speed, reliability, and cost control.

This is why Small Language Models (SLMs) are becoming the foundation of modern agentic systems. Combined with LLMs in a hybrid setup, they enable a more practical, scalable approach to automation.

The future of agentic AI will not be defined by model size, but by how intelligently models are used.

If you’re exploring how to build SLM-first AI agents for your use case, I recommend booking an exploration call with Omdena to get started.